Lick-Wilmerding High School has launched a schoolwide effort to reevaluate how the use of large language models (LLMs) powered by artificial intelligence (AI), such as ChatGPT by OpenAI, Gemini by Google and Claude by Anthropic, should be regulated for students and faculty. Co-led by Head of School Raj Mundra and Dean of Technology Charlotte Gjedsted, the newly established AI Task Force will develop policies and guidelines to ensure that AI serves as a tool for learning without undermining academic integrity.

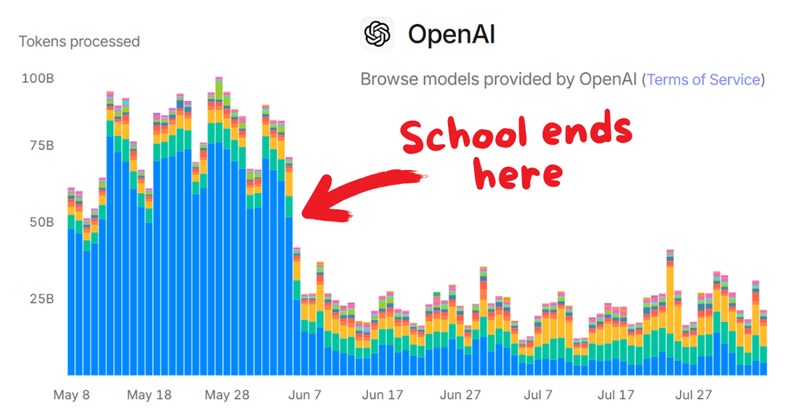

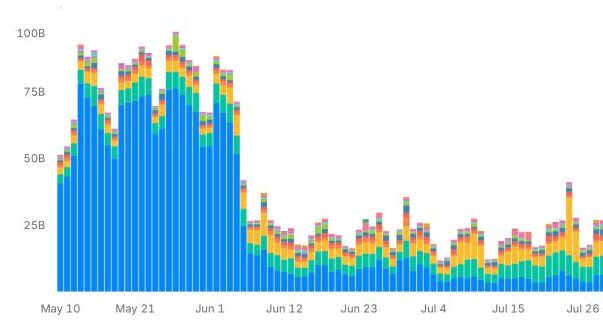

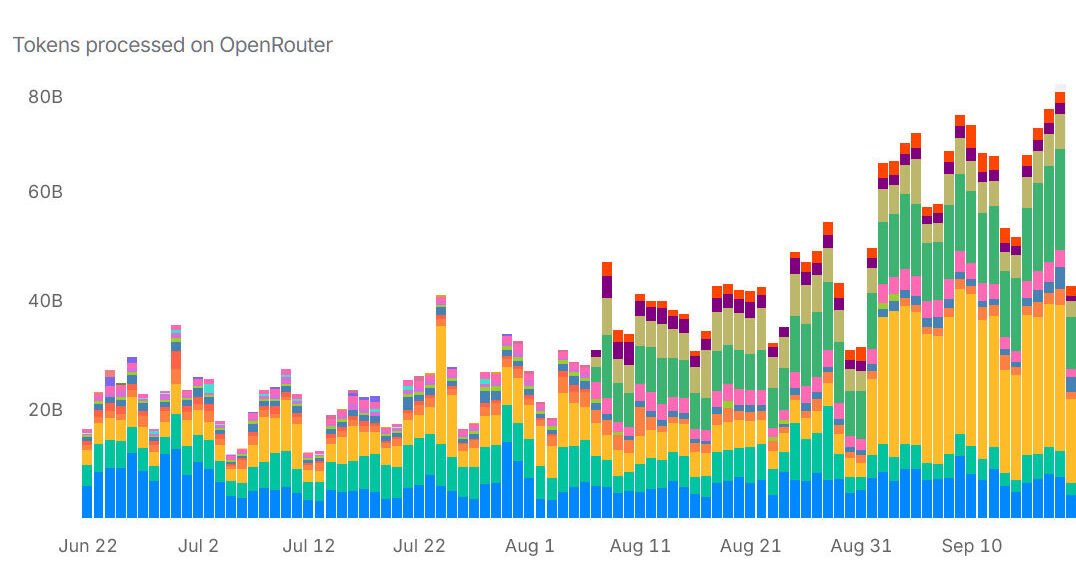

In May, ChatGPT users generated an average of 79.6 billion tokens (the small chunks of text that a model processes) per day, according to data from OpenRouter. That number dropped to 36.7 billion in June, a decline of roughly 54%, when most schools let out for summer break. The timing is telling: generative AI has become deeply ingrained in academic settings.

ChatGPT and other LLMs can summarize texts, solve coding problems and even simulate tutors in most subjects, all in seconds. As their influence grows, educators nationwide are asking the same question LWHS is now tackling: how can schools embrace AI’s potential without compromising student learning?

Formed at the start of the school year, the task force includes faculty from multiple departments, student representatives and administrators. Together, they are tasked with assessing how AI is already being used on campus and drafting policies that align with LWHS’s academic values. The committee’s work is expected to influence everything from The Tiger Handbook to department curricula and the way teachers design assignments. For now, the task force’s leaders are focused on revamping school policy to incorporate AI-specific rules.

“The first goal would be to draft guidelines and guardrails, a policy around Lick’s approach to AI usage to make it consistent,” Gjedsted said.

Mundra highlighted the importance of evolving alongside the LLMs and staying aware of AI’s use in the classroom. “AI should be an augmentation tool, not a replacement for learning,” he said. “It’s about creating a consistent, thoughtful framework… one that supports both kids and faculty in using AI meaningfully.”

One of the task force’s top priorities is conducting surveys and open discussions with students and faculty to assess how AI is currently being used across grade levels and subjects. The group is also differentiating policies by department and providing professional development training so that faculty and students understand how to integrate AI responsibly.

“Departments should have autonomy to say what assignments you can use AI for and what assignments you cannot,” Gjedsted noted.

Initial progress should be visible by the end of the fall semester, with a full framework expected by the end of the school year. That framework is likely to include updates to The Tiger Handbook and department-level guidelines.

“My hope is that by the start of the next academic year, we’ll have a formalized policy that is responsive to and reflective of our community and how our community uses and feels about AI,” Gjedsted said.

Mundra agreed, emphasizing the long-term plan to use AI effectively in schools. “AI is not a fad,” he said. “It’s here, and it’s here to stay.”